Solutions Chapter Group's Elite and Proven MLOps Model with AWS SageMaker

In today's hyper-competitive landscape, having a powerful AI model is like having a world-class symphony orchestra. It’s filled with incredible potential. But without a conductor to lead them, a score to follow, and a stage to perform on, all you have is a room full of talented musicians making noise. The result is chaos, not a masterpiece. This is a challenge many companies face with their artificial intelligence initiatives.

This is the hidden challenge of enterprise AI: many companies now have access to amazing generative AI models, but they lack the operational discipline to manage them. Their machine learning projects become a chaotic mix of different tools, inconsistent data pipelines, and models that are difficult to track, update, or govern. This lack of structure hinders their ability to scale their machine learning efforts.

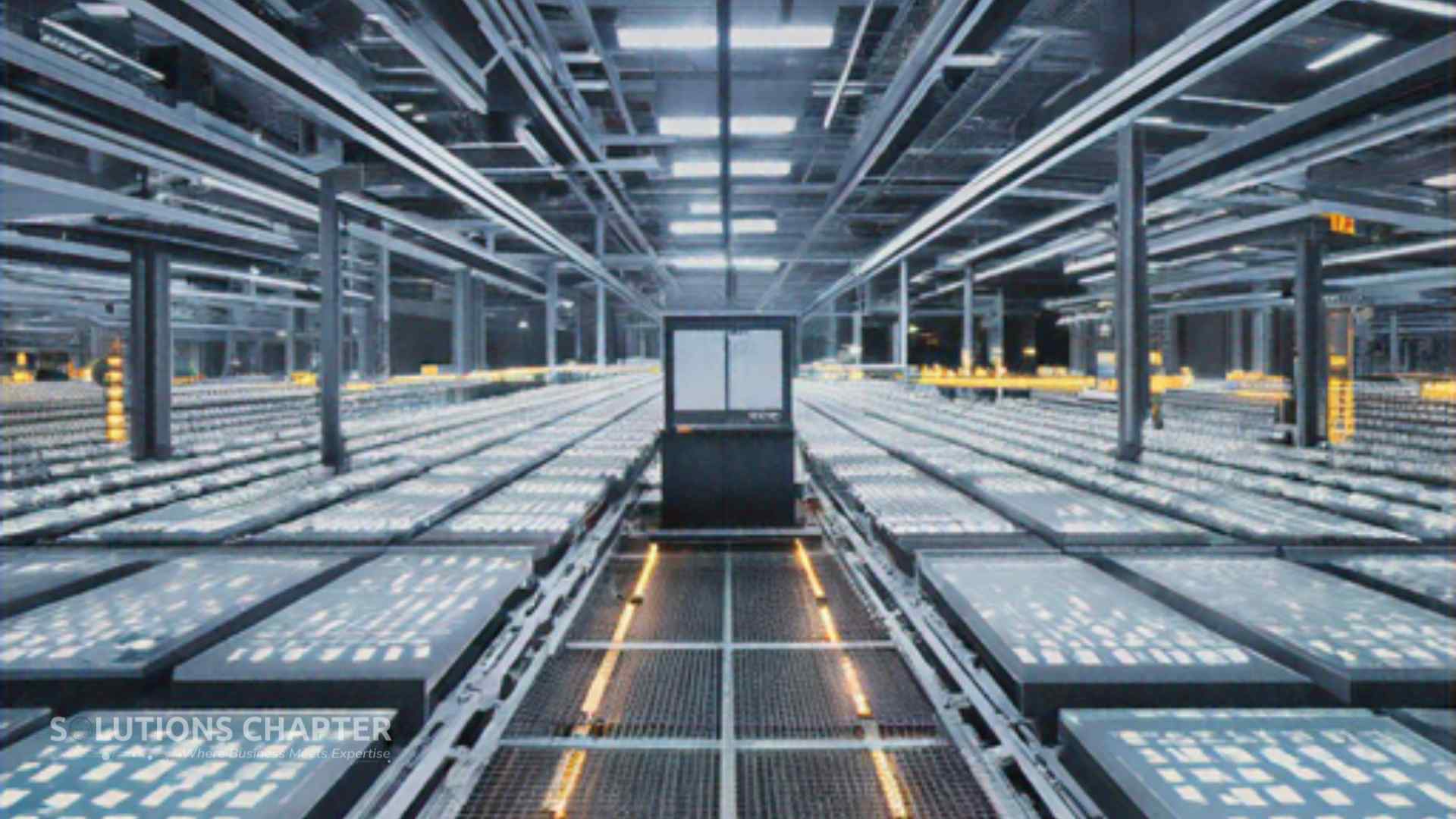

At Solutions Chapter Group, we believe that building great AI requires more than just great models; it requires a great factory. In our strategic partnership with the master builders at StraStan Solutions Corp., we have built what we call "The AI Foundry," an elite and proven MLOps (Machine Learning Operations) model designed to forge high-quality, enterprise-grade AI applications with speed, consistency, and precision. The Amazon platform offers many services to make this possible.

The heart of this foundry, the conductor of our AI orchestra, is AWS SageMaker. Our SageMaker AI model is central to our success. This is an inside look at how our disciplined MLOps model, powered by AWS SageMaker, allows us to manage a diverse portfolio of AI applications, leverage the best creative tools, and strategically integrate specialized platforms to build the future of intelligent enterprise solutions. We rely on Amazon services for this critical work, and our SageMaker AI platform is a core part of our value proposition.

Forging the Future: A Look at StraStan's Four New AI-Powered Applications

An MLOps model is only as good as the products it can create. Our AI Foundry, orchestrated by AWS SageMaker, is the engine that enables our technology partner StraStan to build and manage a diverse suite of powerful new web applications, each with its own unique set of machine learning models and data requirements. Every SageMaker AI project we undertake is managed with precision.

Our portfolio of applications demonstrates the breadth of what a disciplined MLOps process can handle. With Tour With Ease, we are transforming the travel industry by using complex models to analyze flight data and hotel pricing and to offer personalized travel inspiration. This requires managing massive amounts of real-time data and a variety of predictive models. The data analytics are crucial, and the machine learning models need constant training with fresh data.

With Sosyal Genius, we are empowering businesses to master their social media with generative AI that creates compelling text and images, and even automates posting schedules. This project involves managing creative models and complex, event-driven workflows. The amount of data processed is immense. The machine learning models for this use case are highly specialized.

Our IDP Platform is revolutionizing document management for the enterprise, using AI to intelligently extract data from images and scanned documents. This requires a focus on high-security data processing and models that understand the structure of complex text. The machine learning models here are trained on vast amounts of document data. The SageMaker AI platform ensures these processes are secure.

Each of these is a major machine learning project. Managing the code, training data, and deployed models for any one of these applications would be a challenge. Managing all of them simultaneously, ensuring high quality and consistent performance, would be impossible without a central, powerful MLOps platform. AWS SageMaker provides that essential, unified control plane, allowing our developers to oversee every project from a single location, regardless of the specific use case. The Amazon SageMaker AI platform brings order to the complexity of modern machine learning. It gives us access to a number of built-in ML algorithms that speed up model development. The built-in governance features of SageMaker AI are critical for our enterprise clients. We have access to the best Amazon services.

The Spark of Creation: How AWS Bedrock Fuels Our Foundational Models

Every great foundry needs high-quality raw materials. In our AI Foundry, the "spark of creation" for our generative AI applications comes from AWS Bedrock. Think of AWS Bedrock as a curated library of the world's most powerful, foundational AI models. It gives our developers secure, API-based access to an incredible range of tools from industry leaders, all through Amazon services.

This platform allows us to experiment with and deploy a variety of high-quality models without having to build them from the ground up. For a project that requires a sophisticated neural network, we might use a specific model from the Amazon Titan family. For another use case focused on creative text generation, we might use a different model. AWS Bedrock provides a secure and managed environment to access all of these resources, with full support from Amazon. This access is key to our generative AI strategy.

This is a critical part of our design philosophy. We use AWS Bedrock to provide the foundational generative AI capabilities for applications like Sosyal Genius. When a user needs to generate social media content, we can use a powerful model via Bedrock to create engaging text or even draft ideas for images. For our IDP Platform, we can use these models to take extracted data and enhance it, turning raw text into professionally formatted content. The generative AI models are essential.
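To make the Sosyal Genius example more concrete, here is a minimal sketch of how a text-generation request through Bedrock might look. It assumes the Bedrock Runtime Converse API as exposed by boto3; the function name, prompt wording, temperature, and model ID are hypothetical placeholders, not our production code. A small fake client is included so the sketch runs without AWS credentials.

```python
from typing import Any


def draft_social_post(client: Any, model_id: str, topic: str, max_tokens: int = 300) -> str:
    """Ask a Bedrock-hosted model for a short social media post about `topic`.

    `client` is assumed to be a boto3 "bedrock-runtime" client, or any object
    exposing a compatible `converse` method.
    """
    prompt = f"Write a short, engaging social media post about: {topic}"
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens, "temperature": 0.7},
    )
    # The Converse API returns the reply as a list of content blocks.
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks).strip()


class FakeBedrockClient:
    """Offline stand-in (hypothetical) that mimics the Converse response shape."""

    def converse(self, **kwargs: Any) -> dict:
        self.last_request = kwargs  # kept so callers can inspect what was sent
        return {
            "output": {
                "message": {
                    "role": "assistant",
                    "content": [{"text": " Pack light, dream big. "}],
                }
            }
        }


if __name__ == "__main__":
    # With real credentials one would pass boto3.client("bedrock-runtime") instead.
    print(draft_social_post(FakeBedrockClient(), "example-model-id", "weekend trips"))
```

Passing the client in as a parameter keeps the model choice and the AWS wiring outside the function, which makes it easy to swap models per use case.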

The true power comes when we combine these foundational models with our own data. We can take a powerful, pre-trained model and fine-tune it with specific data to specialize it for a particular task. This training process creates a new, custom model that is perfectly suited to our needs. AWS SageMaker then provides the tools to manage the entire lifecycle of these custom models, from training and evaluation to deployment and monitoring. The SageMaker AI platform offers robust support for this model development. Bedrock provides the spark, and AWS SageMaker provides the factory floor to turn that spark into a finished, high-quality product. The training data is managed securely by Amazon services. The machine learning models become more powerful with each training cycle.

Beyond the Factory Floor: Integrating GCP Vertex AI for Specialized Analytics

A world-class foundry is efficient, but it's also smart. It knows when to use its own heavy machinery and when to bring in a specialist with a precision tool for a delicate task. While AWS SageMaker is our factory floor for MLOps, our multi-cloud strategy empowers us to look "beyond the factory floor" and integrate specialized platforms where they offer a distinct advantage. Our SageMaker AI model can even manage these integrations.

This is where GCP Vertex AI comes into our ecosystem. Through our participation in the Google Cloud Startup Program, we've gained deep access to the Google Vertex platform. After extensive testing, we've found that the Google Gemini models, particularly when accessed and fine-tuned on GCP Vertex AI, are exceptionally good at a very specific task: analyzing unstructured text to predict user intent. This is a subtle but incredibly valuable machine learning capability that provides deep data analytics.

Let's consider a practical project. In Tour With Ease, we want to predict a user's travel style. This use case requires sophisticated data analytics. A user searching for "cheap hotels in Bangkok" has a very different intent than someone searching for "luxury resorts in Bangkok for a honeymoon." Our process for this specialized task uses a third-party service, managed under our primary Amazon umbrella.
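The travel-style example can be sketched in a few lines. This is an illustration under stated assumptions, not our actual pipeline: the label set, the prompt wording, and the function names are hypothetical, and the hosted model is represented by any callable that maps a prompt string to a text reply, so no particular SDK call is implied. A keyword-based stub stands in for the model so the sketch runs offline.

```python
from typing import Callable

# Hypothetical label set; a real taxonomy would likely be richer.
TRAVEL_STYLES = ("budget", "luxury", "other")


def build_intent_prompt(query: str) -> str:
    """Wrap a raw search query in a single-label classification prompt."""
    labels = ", ".join(TRAVEL_STYLES)
    return (
        "Classify the travel style implied by the search query below.\n"
        f"Answer with exactly one word from: {labels}.\n"
        f"Query: {query}"
    )


def predict_travel_style(query: str, generate: Callable[[str], str]) -> str:
    """Send the prompt to `generate` and normalise the reply to a known label."""
    raw = generate(build_intent_prompt(query))
    label = raw.strip().lower().rstrip(".")
    # Anything outside the label set is treated as "other" rather than trusted.
    return label if label in TRAVEL_STYLES else "other"


def keyword_stub(prompt: str) -> str:
    """Offline stand-in for a hosted model; it only inspects the query line."""
    query = prompt.rsplit("Query:", 1)[-1].lower()
    if "luxury" in query or "honeymoon" in query:
        return "Luxury."
    if "cheap" in query or "budget" in query:
        return "budget"
    return "unsure"


if __name__ == "__main__":
    for q in ("cheap hotels in Bangkok", "luxury resorts in Bangkok for a honeymoon"):
        print(q, "->", predict_travel_style(q, keyword_stub))
```

Normalising the reply before using it matters because generative models do not always return exactly the label that was requested.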

This entire workflow for this specific use case is managed within GCP. However, the governance and monitoring of the project still fall under our primary MLOps umbrella managed by AWS SageMaker. The SageMaker AI platform tracks the performance of the API calls to the deployed model on Google Vertex, ensuring that our specialist tool is working in perfect harmony with the rest of our AWS-based applications. The built-in governance of SageMaker AI provides this oversight.
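As a rough illustration of what tracking those cross-cloud API calls could involve, the sketch below times an arbitrary call and turns the outcome into metric records. Everything here is an assumption for illustration: the function names, metric names, and the "Endpoint" dimension are hypothetical, and the record layout simply follows the shape that CloudWatch's `put_metric_data` accepts, which is one plausible place to send such data.

```python
import time
from typing import Any, Callable, Dict, List


def timed_call(fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Dict[str, Any]:
    """Run `fn`, capturing latency and any exception instead of raising it."""
    start = time.perf_counter()
    try:
        result, error = fn(*args, **kwargs), None
    except Exception as exc:  # the failure is recorded in the returned dict
        result, error = None, exc
    latency_ms = (time.perf_counter() - start) * 1000.0
    return {"result": result, "error": error, "latency_ms": latency_ms}


def to_metric_data(record: Dict[str, Any], endpoint_name: str) -> List[Dict[str, Any]]:
    """Convert one call record into two metric entries: latency and error count."""
    dims = [{"Name": "Endpoint", "Value": endpoint_name}]
    return [
        {
            "MetricName": "LatencyMs",
            "Value": record["latency_ms"],
            "Unit": "Milliseconds",
            "Dimensions": dims,
        },
        {
            "MetricName": "Errors",
            "Value": 0.0 if record["error"] is None else 1.0,
            "Unit": "Count",
            "Dimensions": dims,
        },
    ]


if __name__ == "__main__":
    # A lambda stands in for the remote model call in this offline example.
    record = timed_call(lambda q: "budget", "cheap hotels in Bangkok")
    for metric in to_metric_data(record, "intent-model"):
        print(metric["MetricName"], metric["Value"])
```

The resulting list could then be published with a CloudWatch client, for example via `put_metric_data(Namespace=..., MetricData=...)`, so that latency and error rates for the external model sit alongside metrics from the AWS-hosted applications.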

This is the essence of a sophisticated, multi-cloud machine learning strategy. It’s not about choosing AWS or Google. It's about using AWS SageMaker to build a robust, enterprise-grade AI factory, and then using that stable platform as a base to strategically integrate the best-in-class tools from other platforms like GCP Vertex AI to handle the most specialized and demanding analytics tasks. The SageMaker AI platform supports frameworks like Apache MXNet, giving us even more flexibility. This approach allows us to use a number of built-in ML algorithms from different providers. The support from Amazon for these kinds of integrations is excellent.

The Power of a Disciplined MLOps Model

Building one AI model is a science experiment. Building an entire portfolio of scalable, secure, and reliable AI applications is an engineering discipline. The difference between the two is a powerful and proven MLOps model. The machine learning processes must be robust.

At Solutions Chapter Group, our AI Foundry, orchestrated by AWS SageMaker, provides the discipline and structure we need to innovate with confidence. The SageMaker AI platform allows us to manage the entire lifecycle of our machine learning models, from the creative spark of AWS Bedrock to the operational excellence of our deployment pipelines, where we can perform real-time inference. The sheer number of built-in ML features in the Amazon ecosystem provides unparalleled support.

More importantly, this strong foundation gives us the strategic freedom to be intelligent consumers of technology. It empowers us to look beyond a single platform and integrate the best specialist services, like GCP Vertex AI, to add layers of precision and power to our applications. This is how we build for the decade ahead. This is how we forge the future with the best that Amazon and other machine learning leaders have to offer. Our generative AI models are second to none because of this approach.

Are you ready to build your AI future on a foundation of excellence?

- To partner with the master builders who can turn your vision into a suite of powerful, well-managed AI applications, we invite you to contact StraStan Solutions Corp. at www.strastan.com.

- To engage with the strategic architects who design and lead elite, future-proof technology ventures, and to explore investment or partnership opportunities, we encourage you to connect with Solutions Chapter Group at www.solutionschapter.com.
